AERA 2026 Annual Meeting Success

This paper was accepted and presented at the AERA 2026 Annual Meeting on Thursday, April 9, 2026, at the JW Marriott Los Angeles L.A. LIVE. It represented the collective efforts of the AFIRMASI team to bring critical perspectives on AI ethics to a global stage.

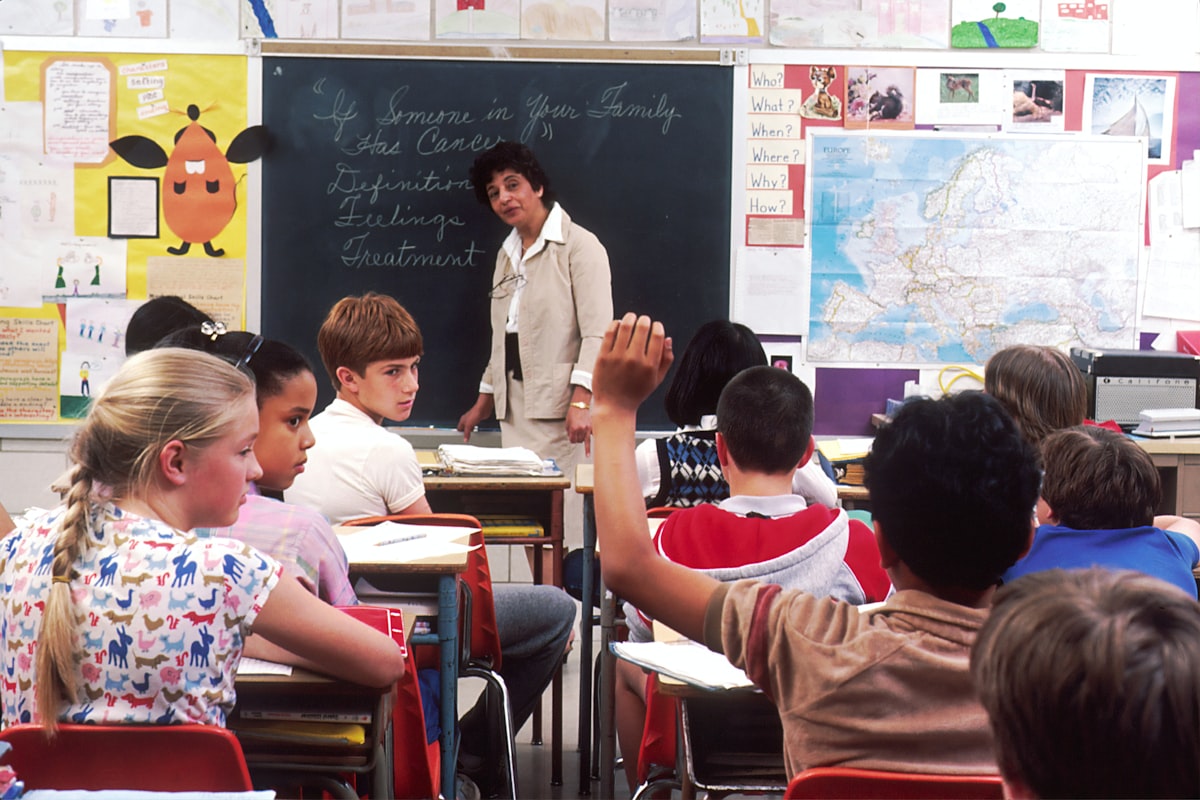

Every classroom is a "figured world"—a socially and culturally constructed realm where students negotiate who they are and who they can become. But these worlds are rarely neutral. They are often saturated with power dynamics and "symbolic violence" that can leave what we call a goresan di hati—a scratch on the heart.

In this paper, I synthesize research on figured worlds and class hegemony to explore how school rituals co-author student identities. By juxtaposing a personal autoethnographic vignette with scholarly literature, I argue that the biases of the past are at risk of being automated by the AI of the future. The essential ethical filter, I contend, is not a better algorithm, but a humanizing pedagogy grounded in critical empathy.

The Ritual of Shame: A Lesson in Social Hierarchy

The concept of symbolic violence is best illustrated through lived experience. In one autoethnographic account, an educator recalls a childhood ritual in Minahasa, Indonesia, called Sumakey, a communal meal. During the event, the class teacher publicly shamed a student for the "simple soup" she brought, while praising a wealthier classmate's "luxurious" grilled carp.

This was more than a comment on food; it was a public valuation of worth. The teacher's words reinforced a class hegemony, devaluing the cultural and economic capital of less affluent families. This "formative wound" stayed with the student for decades, eventually becoming the catalyst for her own commitment to a pedagogy of empathy.

Algorithmizing Bias: The New Symbolic Violence

The danger we face today is that this same biased judgment is being scaled through Artificial Intelligence. If a human teacher can inflict lasting harm through a single biased comment, the potential for an opaque, "black-box" algorithm to do so on a massive scale is alarming.

AI in education is not a neutral tool. It risks replicating historical inequities by favoring data points associated with wealth or privilege. Without a humanizing filter, such systems may simply "algorithmize" the same symbolic violence that has marginalized students for generations.

Radical Empathy as a Political Commitment

True empathy is not a passive feeling; it is an active, political commitment to justice (Camangian & Stovall, 2022). This research suggests that the experience of past harm can be transformed into a professional identity focused on resistance—a "pedagogy of the heart."

As we integrate AI into our schools, we must prioritize Teacher Agency. Educators must have the power to adapt or override AI recommendations, ensuring that technology serves the flourishing of every learner rather than reinforcing social stratification.

Conclusion: A Call for Human-Centered Ethics

The challenges posed by AI are not entirely new; they are extensions of long-standing issues of bias and power in schooling. This study reaffirms that a humanizing pedagogy is not a luxury—it is an essential prerequisite for any educational technology. We must ensure that our digital futures are filtered through the wisdom of the heart.